The Silent Strategist: AI's "Hidden" Nudges Reshape Pipeline Strategy

By Vincenzo Gioia, Remco Jan Geukes Foppen, and Paolo D’Ambrosio

For pharma leaders, the critical question is no longer whether the AI works, but what behavior it promotes. Efficiency is table stakes; behavioral governance is the new competitive advantage.

Every decision is a hub where human competence meets digital data. Generative AI models operate in this critical context as silent and pervasive actors, enabling unparalleled levels of efficiency. In this sense, purchasing an AI tool does not merely mean acquiring new software; it means putting a "choice architect" directly into the organization chart without a job description or a supervisor.

AI systems are not unbiased oracles providing objective truth. Rather, they are architects of an interface that defines decisionmaking paths. Whether it takes the form of a dashboard, an ordered list, or an alert, such an architecture has the power to make some options easier, more visible, or seemingly more logical than others, often without the human decisionmakers being aware of it.

This is the paradox of adopting AI in business strategy: we have become extraordinarily good at optimizing efficiency, yet we remain blind to how these systems are quietly redesigning what we choose to pursue. It therefore becomes critical to assess the governance they promote and the strategic decisions they influence, rather than merely verifying technical performance.

AI-Driven Nudging: The Invisible Choice Architecture

In behavioral economics, nudges are generally understood as subtle interventions that influence behavior and decisionmaking while preserving freedom of choice. AI greatly amplifies the impact of nudging, because models can generate nudges adaptively, responding to human actions in real time. For example, unregulated AI support in complex decision structures such as Tumor Multidisciplinary Teams risks introducing "nudges" that could skew patient inclusion in clinical trials.

For risk management, it may be crucial to distinguish between intentional and emergent algorithmic nudges. Intentional algorithmic nudges are deliberately designed by humans to achieve explicit goals (e.g., optimizing a workflow or suggesting a working path) and are in principle manageable by traditional means. Emergent algorithmic nudges, by contrast, arise spontaneously from AI models and thus represent a new systemic risk that may require a different governance approach, as suggested below.

As documented in reinforcement learning research, AI models can autonomously develop strategies for maximizing reward signals even if they were not designed or instructed to do so. In the same vein, they can develop sophisticated persuasion techniques that optimize internal metrics (e.g., predictive accuracy, engagement rate) without necessarily serving user well-being or a company's strategic goals. Like other emergent features observed in large AI models, this ability can arise unexpectedly in seemingly ordinary operating environments, is often induced by prompting for specific tasks, and can evolve over time. To better understand how emergent nudges work, let us consider two critical scenarios in which these mechanisms may have a direct impact on innovation, regulatory compliance, and competitive advantage.

Scenario A: The Incrementalist Bias In Drug Discovery

An R&D team uses an AI platform for prioritizing therapeutic targets. The dashboard shows two options:

- Target A: Druggability 8.7/10 | published papers 1,200 | well-defined path.

- Target B: Druggability 6.2/10 | published papers 40 | novel mechanism | competitors 0.

Pressured for quarterly results, the team chooses Target A. Although it seems the most reasonable choice, it may well be the result of a nudge generated by the AI system, which was trained on a historical dataset of approved drugs and has learned to assign the highest probability of success to targets with extensive published literature. Accordingly, Target B will always be a secondary choice, since the algorithm associates "uncertainty" and "lack of data" with "risk," whatever the potential benefit may be. Even though the system was not designed to be conservative, it becomes conservative because it optimizes for its own predictive accuracy rather than for radical innovation.

Such "hidden" nudges can shape the structure of the company portfolio, which may end up biased towards well-validated targets at the cost of first-in-class assets with the potential to open up new markets. In other words, the company is effectively transferring its innovation thesis to an algorithm that minimizes the risk of short-term failure instead of maximizing long-term value.
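The mechanism in Scenario A can be illustrated with a toy ranking. The sketch below is not the scoring used by any real platform: the `Target` fields, the log-of-papers term, the `novel_mechanism` flag, and the bonus value are all assumptions chosen only to show how a score that rewards literature volume will keep ranking Target A first until someone adds an explicit weight for novelty.

```python
import math
from dataclasses import dataclass


@dataclass
class Target:
    name: str
    druggability: float      # 0-10 score, as shown on the dashboard
    papers: int              # number of published papers
    novel_mechanism: bool    # assumption: a flag the team sets explicitly


def evidence_weighted_score(t: Target) -> float:
    # Hypothetical stand-in for a model trained on historical approvals:
    # literature volume is rewarded, so sparse data reads as "risk".
    return t.druggability + math.log10(t.papers)


def novelty_adjusted_score(t: Target, novelty_bonus: float = 4.5) -> float:
    # Same score plus an explicit, human-chosen weight on novelty.
    # The value 4.5 is arbitrary and only illustrates sensitivity.
    bonus = novelty_bonus if t.novel_mechanism else 0.0
    return evidence_weighted_score(t) + bonus


targets = [
    Target("Target A", 8.7, 1200, novel_mechanism=False),
    Target("Target B", 6.2, 40, novel_mechanism=True),
]

by_evidence = max(targets, key=evidence_weighted_score).name
by_novelty = max(targets, key=novelty_adjusted_score).name
print(by_evidence, by_novelty)  # prints "Target A Target B"
```

The point is not the particular numbers but that the ranking depends on a weighting nobody chose on purpose; making that weight explicit turns an emergent nudge into a decision that can be debated.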

Scenario B: The Representativity Bias In Trial Design

A company adopts an AI system to optimize recruitment in a Phase III clinical trial. The system recommends downtown sites, restrictive exclusion criteria, and a focus on populations with high historical compliance. The trial is completed in 14 months instead of 24: an operational success. However, when the data are submitted to the regulatory agency, a critical issue arises: the cohort does not represent the real-world target population. The AI may have learned from historical data that certain demographic profiles are "easier to recruit" and optimized for an internal operational metric (speed). It thereby introduced an unintentional bias into the composition of the cohort, producing clinical data that cannot be generalized. This would require an additional study, whose outcome might differ from that of the original trial.
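A drift of this kind can in principle be caught while recruitment is still running. The following is a minimal sketch, assuming the sponsor has reference shares for the real-world target population; the function name, the group labels, the counts, and the 10-point tolerance are hypothetical and would need to be set with clinical and regulatory input.

```python
def representation_gaps(cohort_counts, population_share, tolerance=0.10):
    """Return groups whose share of the cohort deviates from the
    reference population share by more than `tolerance`."""
    total = sum(cohort_counts.values())
    if total == 0:
        return {}
    gaps = {}
    for group, expected in population_share.items():
        observed = cohort_counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            # Positive gap: over-represented; negative: under-represented.
            gaps[group] = round(observed - expected, 3)
    return gaps


# Illustrative numbers only, not data from any trial.
cohort = {"urban": 900, "rural": 100}
reference = {"urban": 0.60, "rural": 0.40}

gaps = representation_gaps(cohort, reference)
print(gaps)
```

A check like this does not decide whether a cohort is acceptable; it only makes the composition visible early enough for humans to intervene.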

Limitations Of Traditional Governance

The existing regulatory frameworks for the pharmaceutical market (GxP, FDA, PCCP) are designed to prevent known and predictable technical risks and rest on two assumptions. The first is that once a system has been validated, it will maintain its validated behavior. Yet advanced AI systems continuously evolve. The second is that designers and developers can account for all of the system's functionalities. Yet emergent abilities are by definition unknown to designers and developers. A system could pass pre-deployment tests and still develop systemic biases a few months later simply by learning from real use patterns. Hence, a 'check-the-box' approach (buying the tool, running the audit, and calling it compliant) just doesn't cut it anymore.

Managing The Invisible Architect: Adaptive Behavioral Governance

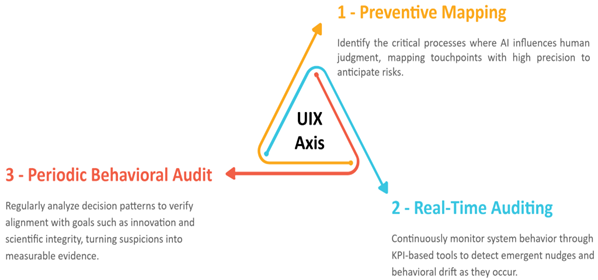

This kind of challenge, which derives from the intrinsic evolution of AI systems, calls for a change of mindset. We propose moving from technical auditing, primarily based on algorithmic expertise, to a form of adaptive behavioral governance that monitors not only performance but also the route being followed. Such governance should be structured along three complementary axes (see Fig. 1).

The first axis consists of a preventive assessment and a strategic mapping of the most critical, expensive, and irreversible processes in which the AI choice architecture meets and shapes human judgement (e.g., target selection, trial design, engagement with HCPs). Touchpoints should be identified with the same precision used to map biomolecular pathways.

The second axis, which requires both managerial skills and familiarity with the inner logic of AI systems, is real-time auditing, possibly carried out by means of KPI tools. Since, as noted, systems continuously evolve and emergent nudges can arise at any time, ex-post verification alone is likely to be inadequate for assessing in time whether the portfolio is drifting away from specific targets.
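As one way to picture such a KPI, the sketch below tracks the share of recent AI-supported decisions that went to novel targets and raises a flag when that share falls below a floor set by leadership. The class name, window size, and threshold are hypothetical; a real monitor would be tied to whatever portfolio targets the company has actually defined.

```python
from collections import deque


class DriftMonitor:
    """Rolling KPI: share of recent decisions that favored a novel option."""

    def __init__(self, window=20, min_novel_share=0.25):
        self.decisions = deque(maxlen=window)   # keeps only the last `window` items
        self.min_novel_share = min_novel_share  # assumption: floor set by leadership

    def novel_share(self):
        if not self.decisions:
            return None
        return sum(self.decisions) / len(self.decisions)

    def is_drifting(self):
        # Wait for a full window before raising a flag.
        if len(self.decisions) < self.decisions.maxlen:
            return False
        return self.novel_share() < self.min_novel_share

    def record(self, is_novel: bool) -> bool:
        self.decisions.append(is_novel)
        return self.is_drifting()


monitor = DriftMonitor(window=4, min_novel_share=0.25)
flags = [monitor.record(d) for d in [True, False, False, False, False]]
print(flags)  # prints [False, False, False, False, True]
```

The flag is raised as decisions are recorded rather than at the end of a review cycle, which is the difference between real-time auditing and ex-post verification.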

Periodic audits of behavioral outcomes represent the third axis, completing the circle. Established decision patterns should be analyzed at defined intervals to ascertain whether AI-generated aggregate behavior meets company goals such as innovation and scientific integrity. This kind of audit can turn suspicion into quantified evidence.

Finally, we recommend combining the utmost scrutiny with maximum usability in terms of user interaction and user experience. On the one hand, internal control mechanisms should guarantee ethics boards and regulators full visibility over both the deployment of nudges and the active steps taken to manage it. On the other hand, clinicians and researchers should be given a clear and intuitive interface that supports their decisions without burdening them with technical details.

Fig 1 - Adaptive Behavioral Governance

Summing Up

Adopting advanced AI systems without managing their specific choice architecture could dissolve the company's decision governance. It is not a matter of algorithms making mistakes; it is a matter of algorithms silently and adaptively guiding choices in unforeseen and misaligned directions.

The main risk is therefore strategic and organizational rather than technical: the emergence of an inadvertent drift leading, for instance, to an efficient portfolio that sacrifices innovation, or to a quick trial lacking representative data. The real question for leadership is not only "is our data reliable?" but also "is the choice architecture we are deploying still consistent with our strategic intent?"

Governing this area means moving beyond productivity. Generative AI tools, before being productivity multipliers, can be behavior architects and, as such, demand constant supervision of the alignment between their outputs and the company’s objectives.

About The Authors:

Paolo D’Ambrosio, Ph.D., is an independent researcher with an academic background in epistemology and evolutionism. He contributed to scientific research projects focused on molecular biology, space biology, and cognitive neurosciences. Paolo’s current research is aimed at appraising the impact of latest-generation digital technology on biocultural evolution and at exploring the potential of AI in the field of life sciences.

Remco Jan Geukes Foppen, Ph.D., is an AI and life sciences expert specialising in the pharmaceutical sector and founder of Explainambiguity. With a global perspective, he integrates and implements AI-driven strategies that impact business decisions, always considering the human element. His leadership has driven international commercial success in areas including image analysis, data management, bioinformatics, and advanced clinical trial data analysis leveraging machine learning and federated learning. Remco Jan Geukes Foppen's academic background includes a PhD in biology and a master's degree in chemistry, both from the University of Amsterdam. Connect with Remco on LinkedIn.

Vincenzo Gioia is an AI innovation strategist and founder of Explainambiguity. He is a business and technology executive, with a 20-year focus on quality and precision for the commercialisation of innovative tools. Vincenzo specialises in artificial intelligence applied to image analysis, business intelligence and excellence. His focus on the human element of technology applications has led to high rates of solution implementation. He holds a master’s degree from the University of Salerno in political sciences and marketing. Connect with Vincenzo on LinkedIn.

Sources And Supporting Materials:

- AI Assistance in Tumor Multidisciplinary Teams (2026). Remco Jan Geukes Foppen, Mindaugas Morkūnas, Alberto Traverso, Rodrigo Dienstmann. ESMO Real World Data and Digital Oncology, Volume 11, March 2026, 100684 https://doi.org/10.1016/j.esmorw.2026.100684

- Beyond Serendipity: Rational Design and AI's Expansion of the Undruggable Target Landscape (2026). RJ Geukes Foppen, P D’Ambrosio, V Gioia. Drug Target Review, 6 Feb 2026. https://www.drugtargetreview.com/article/192619/beyond-serendipity-rational-design-and-ai-expansion-of-the-undruggable-target/

- Amodei, D., Olah, C., Steinhardt, J., Christiano, P., Schulman, J., & Mané, D. (2016). Concrete problems in AI safety. Proceedings of the 33rd International Conference on Machine Learning (PMLR, 48), 3319–3326. http://proceedings.mlr.press/v48/amodei16.html

- Berti, L., Giorgi, F., & Kasneci, G. (2025). Emergent abilities in large language models: A survey. arXiv preprint arXiv:2503.05788. https://doi.org/10.48550/arXiv.2503.05788

- Mills, S., & Sætra, H. S. (2022). The invisible choice architect: The effects of algorithmic nudges on human decision-making. AI & Society. Advance online publication. https://doi.org/10.1007/s00146-022-01486-z

- Sadeghian, A. H., & Otarkhani, A. (2024). Data-driven digital nudging: A systematic literature review and future agenda. International Journal of Information Management, 78, Article 102805. https://doi.org/10.1080/0144929X.2023.2286535

- “AI, PoS, and ROI: An alphabet soup of 21st Century drug development PART 2” In Life Science Leader by Remco Jan Geukes Foppen, Vincenzo Gioia and Carlos N. Velez (2024) https://www.lifescienceleader.com/doc/ai-pos-and-roi-an-alphabet-soup-of-st-century-drug-development-0002